Scientific publishing is grappling with an increasingly thorny problem: what do you do about AI in peer review?

Ecologist Timothée Poisot recently received a review that was clearly generated by ChatGPT. The document contained the following telltale string of words: “Here is a revised version of your review with improved clarity and structure.”

Poisot was outraged. “I submit a manuscript for review in the hope of getting comments from my peers,” he fumed in a blog post. “If this assumption is not met, the entire social contract of peer review is gone.”

Poisot's experience is not an isolated incident. A recent study published in Nature found that up to 17% of reviews for AI conference papers in 2023-24 showed signs of substantial modification by language models.

And in a separate Nature survey, nearly one in five researchers admitted to using AI to speed up and ease the peer review process.

We have also seen some absurd cases of what happens when AI-generated content slips through the peer review process, which is meant to uphold the quality of research.

In 2024, a paper published in a Frontiers journal, which examined some highly complex cell signaling pathways, was found to contain bizarre, nonsensical diagrams created by the AI art tool Midjourney.

One image showed a deformed rat, while others were just random swirls and squiggles, full of garbled text.

Commentators on Twitter were horrified that such obviously flawed figures had made it through peer review. “Um, how did Figure 1 get past a peer reviewer?!” asked one.

There are essentially two risks: a) peer reviewers using AI to evaluate content, and b) AI-generated content slipping through the entire peer review process.

Publishers are responding to the problem. Elsevier has banned generative AI in peer review. Wiley and Springer Nature allow “limited use” with disclosure. Some, like the American Institute of Physics, are piloting AI tools to supplement human feedback, but not replace it.

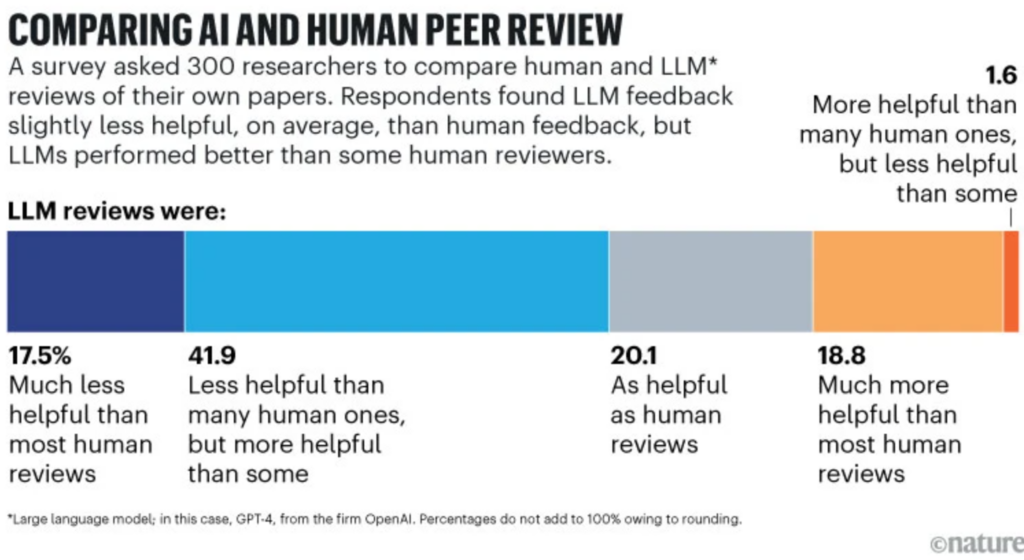

However, AI's allure is strong, and some see benefits when it is used judiciously. A Stanford study found that 40% of scientists felt ChatGPT reviews of their work could be just as helpful as human ones, and 20% felt they could be more helpful.

Still, academia has revolved around human input for millennia, so resistance is strong. “Not fighting automated reviews means that we have given up,” wrote Poisot.

The whole point of peer review, many argue, is considered feedback from fellow experts, not an algorithmic rubber stamp.