Our ancestors once huddled in small, isolated communities, their faces illuminated by flickering fires.

Evidence of controlled campfires for cooking and heat dates back some 700,000 years, but hundreds of thousands of years before then, Homo erectus had begun to live in small social groups.

At this point, we can observe changes in the vocal tract that indicate primitive forms of communication.

This is when early humans began to translate and share their internal states, essentially constructing a primitive worldview in which someone and something existed beyond the self.

These early forms of communication and social bonding led to a cascade of changes that thrust human evolution forward, culminating in the emergence and dominance of modern humans.

However, archaeological evidence suggests that it wasn’t until the last 20,000 years or so that humans began to ‘settle down’ and engage in increasingly complex social and cultural practices.

Little did early hominids know that the hearth around which they gathered was but a pale reflection of the hearth that burned inside them – the hearth of consciousness, illuminating their path to becoming human.

And little did they know that countless generations later, their descendants would find themselves gathered around a special kind of fireside – the intense, electric glow of their screens.

The primal roots of human thought

To understand the character of this primitive mind, we must look to the work of evolutionary psychologists and anthropologists who’ve sought to reconstruct the cognitive world of our distant ancestors.

One of modern evolutionary psychology’s key insights is that the human mind is not a blank slate but a set of specialized cognitive modules shaped by natural selection to solve specific adaptive problems.

Specialization is not exclusive to humans. Darwin’s early research observed that the Galapagos finches, for instance, had highly specialized beaks that enabled them to occupy different ecological niches.

These varied tools correlated with diverse behaviors. One finch might crack nuts with its large, broad beak, while another might pry berries from a bush using its razor-like bill.

Darwin’s finches indicated the importance of domain specialism in evolution. Source: Wikimedia Commons.

As psychologist Leda Cosmides and her colleagues, including Steven Pinker, have argued in theories now grouped under the label ‘evolutionary psychology,’ the brain’s modules once operated largely independently of each other, each processing domain-specific information.

In the context of primitive history, this modular architecture would have been highly adaptive.

In a world where survival depended on the ability to quickly detect and respond to threats and opportunities in the environment, a mind composed of specialized, domain-specific modules would have been more efficient than a general-purpose brain.

Our distant ancestors inhabited this world. It was a world of immediate sensations, primarily unconnected by an overarching narrative or sense of self.

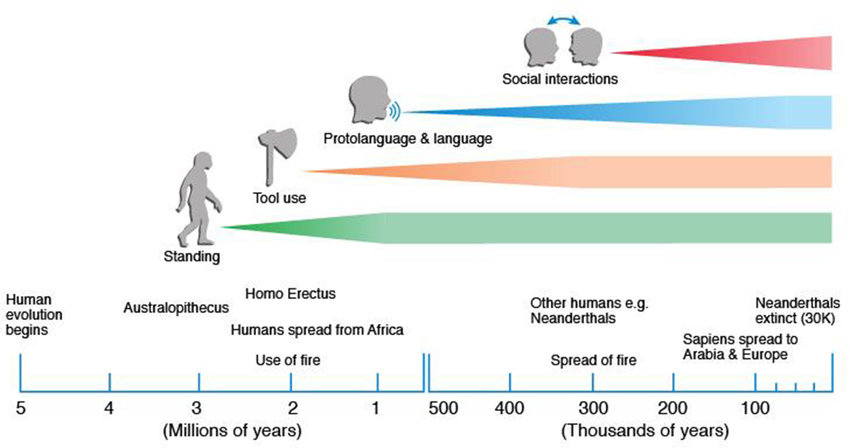

However, over the course of thousands of years, hominid brains became more broadly interconnected, enabling tool use, protolanguage, language, and social interaction.

Timeline of human development. Source: ResearchGate.

Today, we know that different parts of the brain become heavily integrated from birth. Neuroimaging studies, such as Raichle et al. (2001), show that information is continually shared between various parts of the brain, even at rest.

While we take this for granted and probably can’t imagine anything else, it wasn’t the case for our ancient ancestors.

For example, Holloway’s research (1996) on early hominid brains indicates that changes in brain architecture over time supported greater integration. Stout and Chaminade (2007) explored how tool-making activities correlate with neural integration, suggesting that making tools for various purposes may have driven the development of more advanced neural capabilities.

The need for complex communication and abstract reasoning increased as humans progressed from small-scale groups where individuals were intimately aware of each other’s experiences to larger groups that included people from varied geographies, backgrounds, and appearances.

Language was perhaps the most powerful catalyst for humanity’s cognitive revolution. It created shared meaning by encoding and transmitting complex ideas and experiences across minds and generations.

Moreover, it conferred a survival advantage. Humans who could efficiently communicate and work with others gained benefits over those less able.

And, gradually, humans began to vocalize and communicate for its own sake rather than for any specific adaptive or survival value.

Entering the age of hyper-personalized realities

In 2016, Mark Zuckerberg strode through a Samsung event as attendees wore Gear VR headsets, the resulting image becoming an iconic foreshadowing of VR’s power to isolate people in their own private worlds.

Is this picture an allegory of our future? The people in a virtual reality with our leaders walking by us. pic.twitter.com/ntTaTN3SdR

VR didn’t take off back then; however, with the Apple Vision Pro, it’s on the cusp of a new era of mass adoption.

Today’s AI and VR technologies can generate highly realistic and context-aware text, images, and 3D models for endlessly personalized immersive environments, characters, and narratives.

In parallel, recent breakthroughs in edge computing and on-device AI processing have enabled VR devices to run sophisticated AI algorithms locally without relying on cloud-based servers.

This embeds real-time, low-latency AI applications, such as dynamic object recognition, gesture tracking, and speech interfaces, into VR environments.

VR: is the hype giving way to reality?

What sets the hyper-personalized realities of the AI and VR age apart is their scope and granularity.

With machine learning, it’s now possible to create virtual worlds that are not just superficially tailored to our tastes but fundamentally shaped by our cognitive quirks and idiosyncrasies.

With VR, we’re not only content consumers but active participants in our own private realities.

But what are the impacts? Is it all just a novelty, a wave of hype that’s bound to break as people grow bored of VR, as they did a few years ago?

We don’t yet know, though VR uptake is certainly picking up pace. Costs remain prohibitive for now, with the Vision Pro costing a cool $3,499, but they are expected to drop sharply.

That said, VR has had similar hype moments that dissolved into nothing. According to Bloomberg, Meta invested $50 billion into its Metaverse project, which ultimately became one of its biggest failures.

It’s certainly not sensible to claim that everyone will be living in VR within five, ten, or even 25 years. However, spotting people in public spaces wearing the Vision Pro is becoming fairly common, a symbol of VR’s momentum.

People wouldn’t have dreamed of doing that with a Meta headset. Apple has an incredible opportunity to break down barriers to VR and normalize its use in everyday scenarios.

Plus, Apple’s timing is favorable. AI doesn’t just support VR performance-wise; it also helps conjure a futuristic world where VR truly belongs.

VR’s impact on the brain

So, what about the impacts of VR? Is it just a visual tonic for the senses, or should we anticipate deeper effects?

There’s plenty of preliminary evidence. For example, a paper by Madary and Metzinger (2016) argued that VR may lead to a “lack of perspective,” potentially affecting a person’s sense of self and decision-making processes.

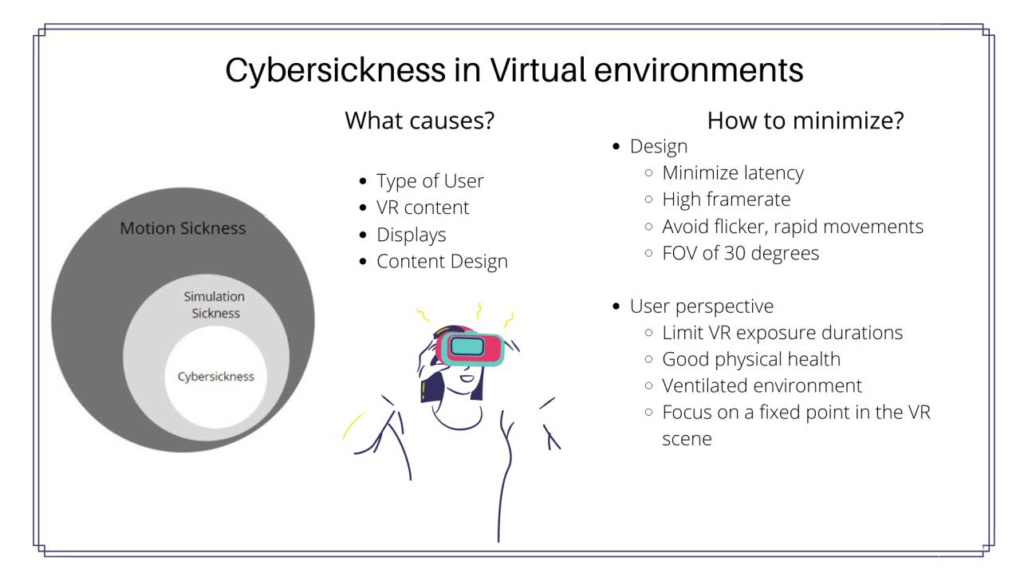

A scientific review by Spiegel (2018) examined the potential risks and negative effects of VR use. The findings suggested that prolonged exposure to VR environments may lead to symptoms such as eye strain, headaches, and nausea, collectively called “cybersickness.”

Cybersickness induced by VR. Source: Chandra, Jamiy, and Reza (2022)

Among the stranger impacts of VR is the “Proteus Effect,” explored in a study by Yee and Bailenson (2007): the phenomenon in which a person’s behavior in a virtual environment is influenced by their avatar’s appearance.

The study found that participants assigned taller avatars exhibited more confident and assertive behavior in subsequent virtual interactions, demonstrating the potential for VR to change behavior and self-perception.

We’re sure to see more psychological and medical research on prolonged VR exposure now that the Apple Vision Pro is out.

The positive case for VR

While it’s important to acknowledge and address the risks associated with VR, it’s equally important to recognize this technology’s advantages and opportunities.

One of the most promising applications of VR is in education. Immersive virtual environments offer students interactive learning experiences, allowing them to explore complex concepts and phenomena in ways that traditional teaching methods cannot replicate.

For example, a study by Parong and Mayer (2018) found that students who learned through a VR simulation exhibited higher retention and knowledge transfer than those who learned through a desktop simulation or slideshow. That could be a lifeline for some with learning difficulties or sensory challenges.

VR also holds massive potential in healthcare, particularly in therapy and rehabilitation.

For example, a meta-analysis by Fodor et al. (2018) examined the effectiveness of VR interventions for various mental health conditions, including anxiety disorders, phobias, and post-traumatic stress disorder (PTSD).

Another intriguing study by Herrera et al. (2018) investigated the impact of a VR experience designed to promote empathy toward homeless individuals.

The Apple Vision Pro has already been used to host a personalizable interactive therapy bot named XAIA.

Lead researcher Brennan Spiegel, MD, MSHS, wrote of the therapy bot: “In the Apple Vision Pro, we’re in a position to leverage every pixel of that remarkable resolution and the total spectrum of vivid colours to craft a type of immersive therapy that’s engaging and deeply personal.”

Avoiding the risks of over-immersion

At first glance, the prospect of living in a world of hyper-personalized virtual realities may seem like the ultimate fulfillment of a dream – a chance to finally inhabit a universe that’s perfectly tailored to our individual needs and desires.

It could also be a world we live in indefinitely, saving and loading checkpoints as we roam digital environments.

However, if left unchecked, this ultimate form of autonomy has another side.

The notion of “reality” as a stable and objective ground of experience depends on a common perceptual and conceptual framework: a set of shared assumptions, categories, and norms that allow us to communicate and coordinate our actions with others.

If we become enveloped in individualized virtual worlds where each person inhabits their own bespoke reality, this common ground could become increasingly fragmented.

When your virtual world radically differs from mine, not only in its surface details but in its deepest ontological and epistemological foundations, mutual understanding and collaboration risk fraying at the edges.

That oddly mirrors our distant ancestors’ isolated, individualized worlds.

As humanity spends more time in isolated digital realities, our thoughts, emotions, and behaviors may become more attuned to each reality’s unique logic and structure.

So, how can we embrace the benefits of next-gen VR without losing sight of our shared humanity?

Vigilance, awareness, and respect will be critical. The future will see some who embrace living in VR worlds, augmenting themselves with brain implants, cybernetics, and so forth. It will also see others who reject all this in favor of a more traditional lifestyle.

We must respect both perspectives.

This means being mindful of the algorithms and interfaces that mediate our experience of the world and actively seeking out experiences that challenge our assumptions and biases. Hopefully, keeping one foot outside the virtual world will become intuitive.

So, as we gather around the flickering screens of our digital campfires, let us not forget the lessons of our ancestors, the importance of intersubjectivity, and the perils of retreating into isolation.

Beneath the surface of our differences and idiosyncrasies, we share a fundamental cognitive architecture shaped by millions of years of evolutionary history.