All levels of research are being transformed by the rise of artificial intelligence (AI). Don't have time to read that magazine article? AI-powered tools such as TLDRthis will summarize it for you.

Struggling to find relevant sources for your review? AI-powered tools will list suitable items at the touch of a button. Are your human research participants too expensive or too complicated to manage? No problem: try synthetic participants instead.

Each of these tools suggests that AI could be superior to humans at outlining and explaining concepts or ideas. But can humans be replaced when it comes to qualitative research?

This is something we recently had to grapple with while conducting independent research into mobile dating during the COVID-19 pandemic. And what we found should dampen enthusiasm for artificial responses over the words of human participants.

Encountering AI in our research

Our research explores how people might engage with mobile dating during the pandemic in Aotearoa New Zealand. Our goal was to look at broader societal responses to mobile dating as the pandemic progressed and public health orders changed over time.

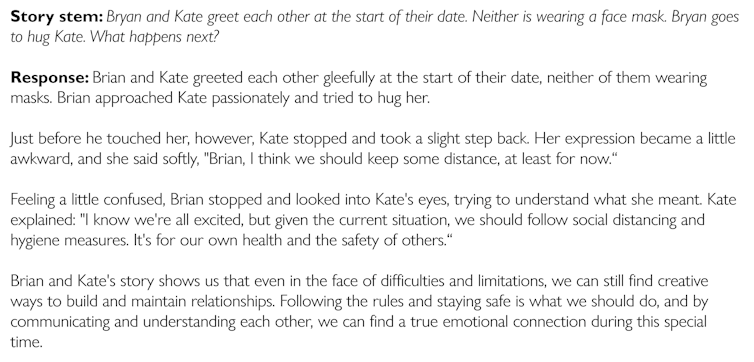

As part of this ongoing research, we ask participants to develop stories in response to hypothetical scenarios.

In 2021 and 2022, we received a wide range of interesting and quirky responses from 110 New Zealanders recruited via Facebook. Each participant received a gift voucher for their time.

Participants described characters navigating the challenges of “Zoom dates” and arguing about vaccination status or wearing masks. Others wrote passionate love stories with sensational details. Some even broke the fourth wall and wrote to us directly, complaining about the required word length of their stories or the quality of our prompts.

These responses captured the ups and downs of online dating, the boredom and loneliness of lockdown, and the joys and desperation of finding love in the time of COVID-19.

But perhaps most of all, these responses reminded us of the idiosyncratic and irreverent aspects of human participation in research: the unexpected directions participants take, and even the unsolicited feedback researchers can receive.

But in the final round of our study at the end of 2023, something had clearly changed in the 60 stories we received.

This time around, most of the stories felt “off.” The choice of words was quite stilted or overly formal. And each story was quite moralistic about what you “should” do in a situation.

Using AI detection tools like ZeroGPT, we concluded that participants – and even bots – were using AI to generate story responses for them, possibly to receive the gift card with minimal effort.

Contrary to claims that AI can adequately replicate human research participants, we found the AI-generated stories to be disappointing.

We were reminded that a vital part of any social research is that the data is based on lived experience.

Is AI the issue?

Perhaps the greatest threat to human research is not AI, but the philosophy that underlies it.

It is worth noting that most claims about AI's ability to replace humans come from computer scientists or quantitative social scientists. In such studies, human reasoning or behavior is often measured using scorecards or yes/no statements.

This approach necessarily places human experience inside a framework that can be more easily analyzed through computational or artificial interpretation.

In contrast, we are qualitative researchers interested in the messy, emotional, lived experience of people's perspectives on dating. We were drawn to the thrills and disappointments that participants had originally described in online dating, the frustrations and challenges of trying to use dating apps, and the opportunities these apps might create for intimacy in times of lockdown and changing health regulations.

In general, we found that AI poorly simulated these experiences.

Some may assume that generative AI is here to stay, or that AI should be viewed as offering a range of tools to researchers. Other researchers might resort to forms of data collection, such as surveys, that might minimize the impact of unwanted AI involvement.

But based on our recent research experience, we believe that theoretically informed, qualitative social research is best equipped to detect and protect against AI interference.

There are further implications for research. The threat of AI as an unwanted participant means researchers will have to work longer or harder to detect fraudulent participants.

Academic institutions must begin developing policies and practices to reduce the burden on individual researchers seeking to conduct research in the changing AI environment.

Regardless of researchers' theoretical orientation, the question of how we work to limit the involvement of AI is an issue for anyone interested in understanding human perspectives or experiences. If anything, the limitations of AI re-emphasize the importance of being human in social research.